Anthropic’s new AI tool deploys multiple agents to scan pull requests and flag software bugs. (Image: Anthropic)

Frontier artificial intelligence (AI) lab Anthropic is rolling out new AI tools and upgrades in quick succession. On Monday, March 9, the company introduced Code Review for Teams and Enterprise users. Code Review deploys teams of AI agents to catch bugs in submitted code changes before human reviewers see them.

Admins can enable the feature per repository, after which it runs in the cloud whenever a pull request is opened. Anthropic says it runs the feature on nearly every pull request internally. In software engineering, a pull request is a proposal to merge a set of changes into a codebase, the complete collection of source code for a software product. Opening one signals that the changes are ready to be reviewed and, once approved, merged into the main project. In simple terms, pull requests let developers review code before it is integrated into the main codebase.

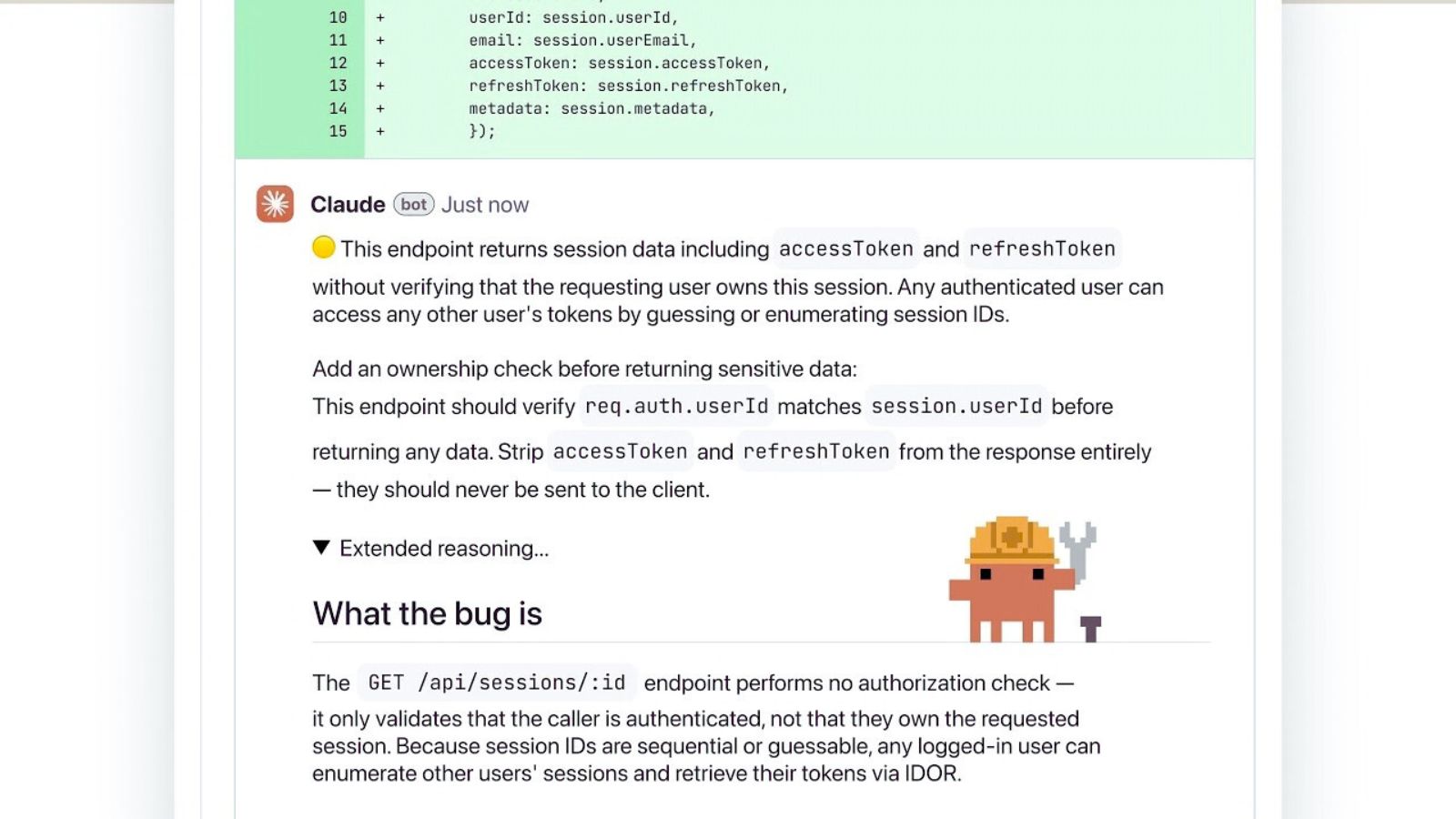

When a pull request is opened, Code Review dispatches a team of AI agents that look for bugs, verify them to filter out false positives, and rank them by severity. The results appear as a single high-signal overview comment, along with inline comments on specific bugs.
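To make the described flow concrete, here is a minimal, hypothetical sketch of a find, verify, and rank pipeline. It is not Anthropic's implementation: the `Finding` type, the stub "agents", the verifier rule and the three-level severity scale are all assumptions for illustration, and real agents would be model calls rather than plain functions.

```python
from dataclasses import dataclass
from typing import Callable, Iterable

# Hypothetical severity scale; a higher number means a more serious bug.
SEVERITY = {"low": 1, "medium": 2, "high": 3}


@dataclass(frozen=True)  # frozen makes findings hashable, so duplicates collapse in a set
class Finding:
    file: str
    line: int
    message: str
    severity: str  # one of the keys in SEVERITY


Finder = Callable[[str], list[Finding]]      # hypothetical agent signature
Verifier = Callable[[Finding, str], bool]    # hypothetical verifier signature


def review(diff: str, finders: Iterable[Finder], verify: Verifier) -> list[Finding]:
    """Run every finder on the diff, keep only verified findings (deduplicated),
    and return them sorted from most to least severe."""
    candidates = [f for finder in finders for f in finder(diff)]
    confirmed = {f for f in candidates if verify(f, diff)}
    return sorted(confirmed, key=lambda f: (-SEVERITY[f.severity], f.file, f.line))


def format_summary(findings: list[Finding]) -> str:
    """Build one overview comment listing each verified finding."""
    if not findings:
        return "No verified issues found."
    lines = [f"{len(findings)} verified issue(s):"]
    lines += [f"- [{f.severity}] {f.file}:{f.line} {f.message}" for f in findings]
    return "\n".join(lines)


# Stub agents standing in for model-backed reviewers (hypothetical).
def null_check_agent(diff: str) -> list[Finding]:
    return [Finding("app.py", 12, "possible None dereference", "high")]


def style_agent(diff: str) -> list[Finding]:
    return [
        Finding("app.py", 3, "unused import", "low"),
        Finding("app.py", 12, "possible None dereference", "high"),  # duplicate of the above
    ]


def verifier(finding: Finding, diff: str) -> bool:
    # Assumed rule for the demo: style-only nits are treated as false positives.
    return finding.severity != "low"


result = review("example diff", [null_check_agent, style_agent], verifier)
print(format_summary(result))
```

Because two agents report the same bug and the verifier rejects the low-severity nit, the summary lists a single high-severity issue. Separating "find" from "verify" lets many noisy finders run in parallel while a stricter check keeps the final comment short.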

“Code output per Anthropic engineer has grown 200% in the last year. Code review has become a bottleneck, and we hear the same from customers every week. They tell us developers are stretched thin, and many PRs (pull requests) get skims rather than deep reads,” the company said in its official release.

With code review becoming a bottleneck, the company said it needed a reviewer it could trust on every pull request, and Code Review is its answer. According to Anthropic, the feature performs deep, multi-agent reviews that catch bugs human reviewers often miss. The company acknowledged that it is more thorough but also more expensive than its existing Claude Code GitHub Action, which is open source and remains available.

“We run code reviews on nearly every PR at Anthropic. Before, 16% of PRs got substantive review comments. Now 54% do. It won’t approve PRs – that’s still a human call – but it closes the gap so reviewers can actually cover what’s shipping,” the company shared in its official blog.

Code Review is billed by token usage. According to Anthropic, a review costs $15 to $25 on average. To keep spending in check, admins can set monthly limits and use an analytics dashboard to track how many pull requests are reviewed, how many are accepted, and what the reviews cost.
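As a rough illustration of what that average means for a monthly limit, the sketch below estimates how many reviews a cap covers at the low and high ends of the quoted range. The $1,000 cap and the `reviews_within_budget` helper are hypothetical, and real per-review costs vary with token usage.

```python
def reviews_within_budget(monthly_cap: float, cost_per_review: float) -> int:
    """Whole number of reviews that fit under a spending cap (hypothetical helper)."""
    if cost_per_review <= 0:
        raise ValueError("cost_per_review must be positive")
    return int(monthly_cap // cost_per_review)


cap = 1000.0  # hypothetical monthly limit in USD
for cost in (15.0, 25.0):  # low and high ends of the quoted average
    print(f"${cost:.0f}/review -> {reviews_within_budget(cap, cost)} reviews")
```

At these averages, a $1,000 cap would cover roughly 40 to 66 reviews a month.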

Reactions to Claude Code Review

The rollout of Claude Code Review has drawn numerous reactions on X and Reddit. While many praised the feature for catching bugs that might otherwise go unnoticed, some voiced concern about potential job displacement. Fans of vibe coding, an AI-assisted style of software development, seem elated: for them, AI now writes the code, reviews it, and secures it. This marks a shift. AI was long described mainly as a copilot that assists developers; now it can write code, review it, and find and fix bugs, which, in the view of some, makes large parts of the developer's role optional.

The Dario Amodei-led AI startup has been launching products at a rapid pace, with a focus on agent-driven AI and enterprise automation. Earlier this year, the company introduced Claude Opus 4.6 and Sonnet 4.6, advanced AI models aimed at improving long-document analysis and computer-use tasks. It also introduced Claude Code Security, a tool that uses Opus 4.6 to identify zero-day vulnerabilities in codebases.