A recent analysis of AI Overviews found they were accurate approximately 9 out of 10 times. (Image: New York Times)

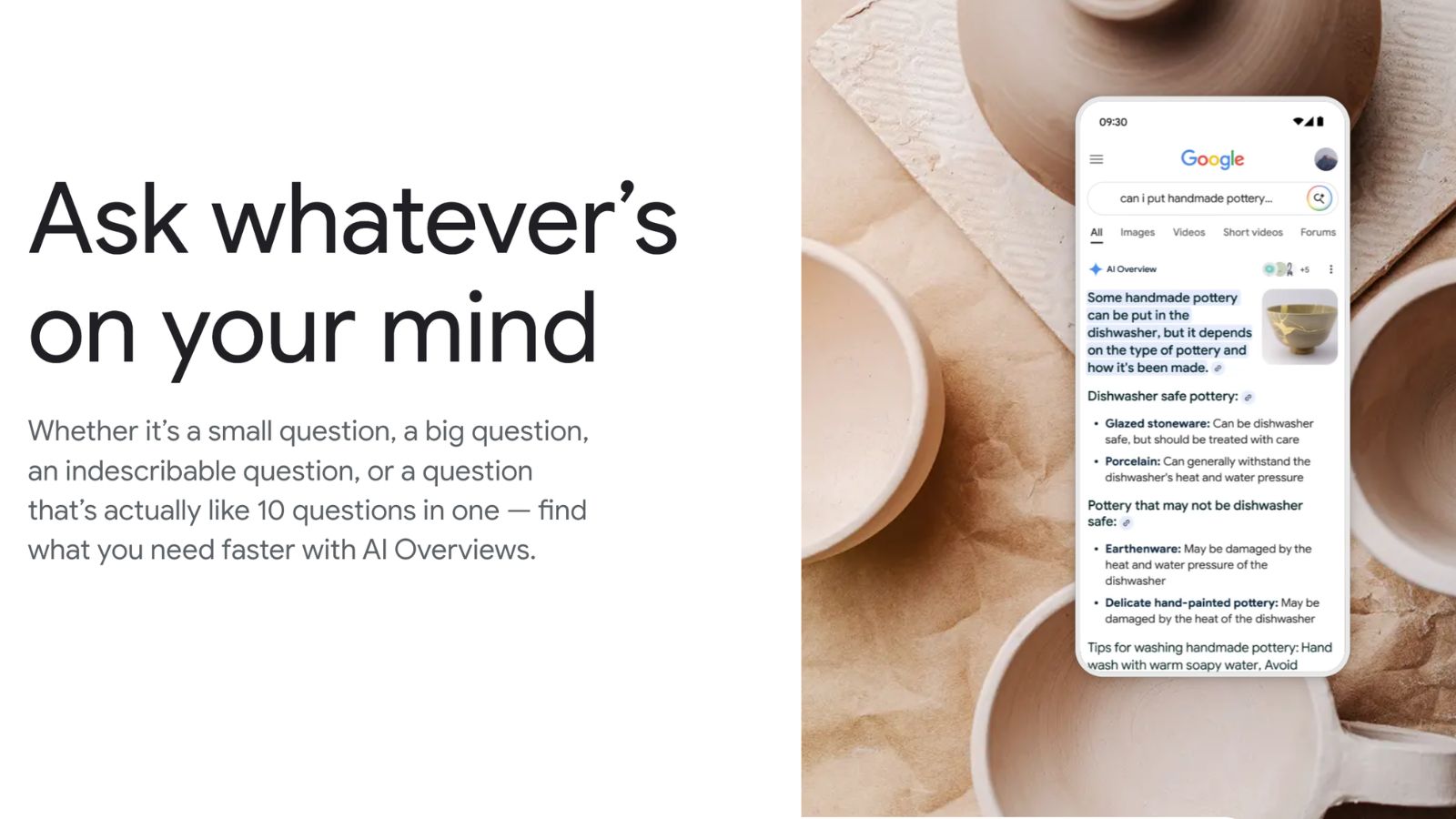

In 2024, Google started giving artificial intelligence-generated answers prime placement at the top of its search results page. The new product, AI Overviews, helped transform Google from a curator of information into a publisher.

A recent analysis by an AI startup called Oumi found that AI Overviews were accurate approximately 9 out of 10 times. But with Google processing more than 5 trillion searches a year, that error rate means Google provides tens of millions of erroneous answers every hour, according to the analysis.
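The hourly figure can be checked with back-of-the-envelope arithmetic. The sketch below is illustrative and is not Oumi's method: the function name and the `overview_share` parameter are hypothetical, and the default assumes every search shows an AI Overview, which the analysis as reported here does not state.

```python
# Rough check of the "tens of millions per hour" figure.
# Inputs come from the article; overview_share is an assumption.
SEARCHES_PER_YEAR = 5e12      # "more than 5 trillion searches a year"
ACCURACY = 0.90               # "approximately 9 out of 10 times"
HOURS_PER_YEAR = 365 * 24     # 8,760 hours, ignoring leap years


def erroneous_answers_per_hour(searches_per_year, accuracy, overview_share=1.0):
    """Estimate wrong AI Overview answers per hour.

    overview_share is the (assumed) fraction of searches that show an
    AI Overview at all; 1.0 gives an upper-bound style estimate.
    """
    searches_per_hour = searches_per_year / HOURS_PER_YEAR
    return searches_per_hour * overview_share * (1 - accuracy)


if __name__ == "__main__":
    upper = erroneous_answers_per_hour(SEARCHES_PER_YEAR, ACCURACY)
    quarter = erroneous_answers_per_hour(SEARCHES_PER_YEAR, ACCURACY, 0.25)
    print(f"All searches show an overview: {upper / 1e6:.0f} million per hour")
    print(f"A quarter of searches do:      {quarter / 1e6:.0f} million per hour")
```

Under these assumptions the estimate stays in the tens of millions per hour even if only a quarter of searches trigger an AI Overview.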

More than half the accurate responses were “ungrounded,” meaning they linked to websites that did not completely support the information they provided. This makes it challenging to check AI Overviews’ accuracy.

Some technologists argue that Google’s AI Overviews are reasonably accurate and that they have improved in recent months. But others worry the average person may not realize those results need double-checking.

At the request of The New York Times, Oumi analyzed the accuracy of Google’s AI Overviews using a benchmark test called SimpleQA, which is widely used across the industry to measure the accuracy of AI systems. The startup tested Google’s system in October, when the most complex questions were answered using an AI technology called Gemini 2, and then again in February, after it was upgraded to Gemini 3, a more powerful AI technology.

In both cases, Oumi’s analysis focused on 4,326 Google searches. The company found that the results were accurate 85% of the time with Gemini 2 and 91% of the time with Gemini 3.
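For a sense of scale, the percentages can be turned into approximate counts. This is a minimal sketch, not Oumi's calculation: the reported accuracy figures are rounded, so the counts are estimates, and the helper names are hypothetical.

```python
# Approximate counts implied by the reported (rounded) accuracy figures.
TOTAL_SEARCHES = 4326  # searches in each round of Oumi's analysis


def approx_counts(total, accuracy):
    """Return (correct, incorrect) counts implied by a rounded accuracy."""
    correct = round(total * accuracy)
    return correct, total - correct


def relative_error_reduction(old_accuracy, new_accuracy):
    """Fraction by which the error rate fell between two runs."""
    old_error = 1 - old_accuracy
    new_error = 1 - new_accuracy
    return (old_error - new_error) / old_error


if __name__ == "__main__":
    for label, acc in (("Gemini 2", 0.85), ("Gemini 3", 0.91)):
        correct, wrong = approx_counts(TOTAL_SEARCHES, acc)
        print(f"{label}: about {correct} correct, about {wrong} incorrect")
    drop = relative_error_reduction(0.85, 0.91)
    print(f"Error rate fell by roughly {drop:.0%}")
```

Read this way, a 6-point gain in accuracy corresponds to the error rate falling from 15% to 9%, a cut of about two-fifths.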

Google acknowledges that its AI Overviews can include errors.

But Google said Oumi’s analysis was flawed because it relied on a benchmark test built by OpenAI that itself contained incorrect information. “This study has serious holes,” Ned Adriance, a Google spokesperson, said in a statement.

AI Overviews provide two kinds of information: answers to questions and lists of websites that support those answers.

Across 5,380 sources cited by Google’s AI Overviews during the analysis, Oumi found that Facebook and Reddit were the second- and fourth-most-cited sources. When Google’s AI Overviews were accurate, they cited Facebook 5% of the time. When they were inaccurate, they cited Facebook 7% of the time.

To determine the accuracy of AI systems, companies like Oumi use their own AI systems to verify each answer. The problem with this method is that the AI system doing the checking can also make mistakes.