When a financial services company recently began using Cursor, an artificial intelligence technology that writes computer code, the difference that it made was immediate.

The company went from producing 25,000 lines of code a month to 250,000 lines. That created a backlog of 1 million lines of code that needed to be reviewed, said Joni Klippert, a co-founder and the CEO of StackHawk, a security startup that was working with the financial services firm.

“The sheer amount of code being delivered, and the increase in vulnerabilities, is something they can’t keep up with,” she said. And as software development moved faster, that forced sales, marketing, customer support and other departments to pick up the pace, Klippert added, creating “a lot of stress.”

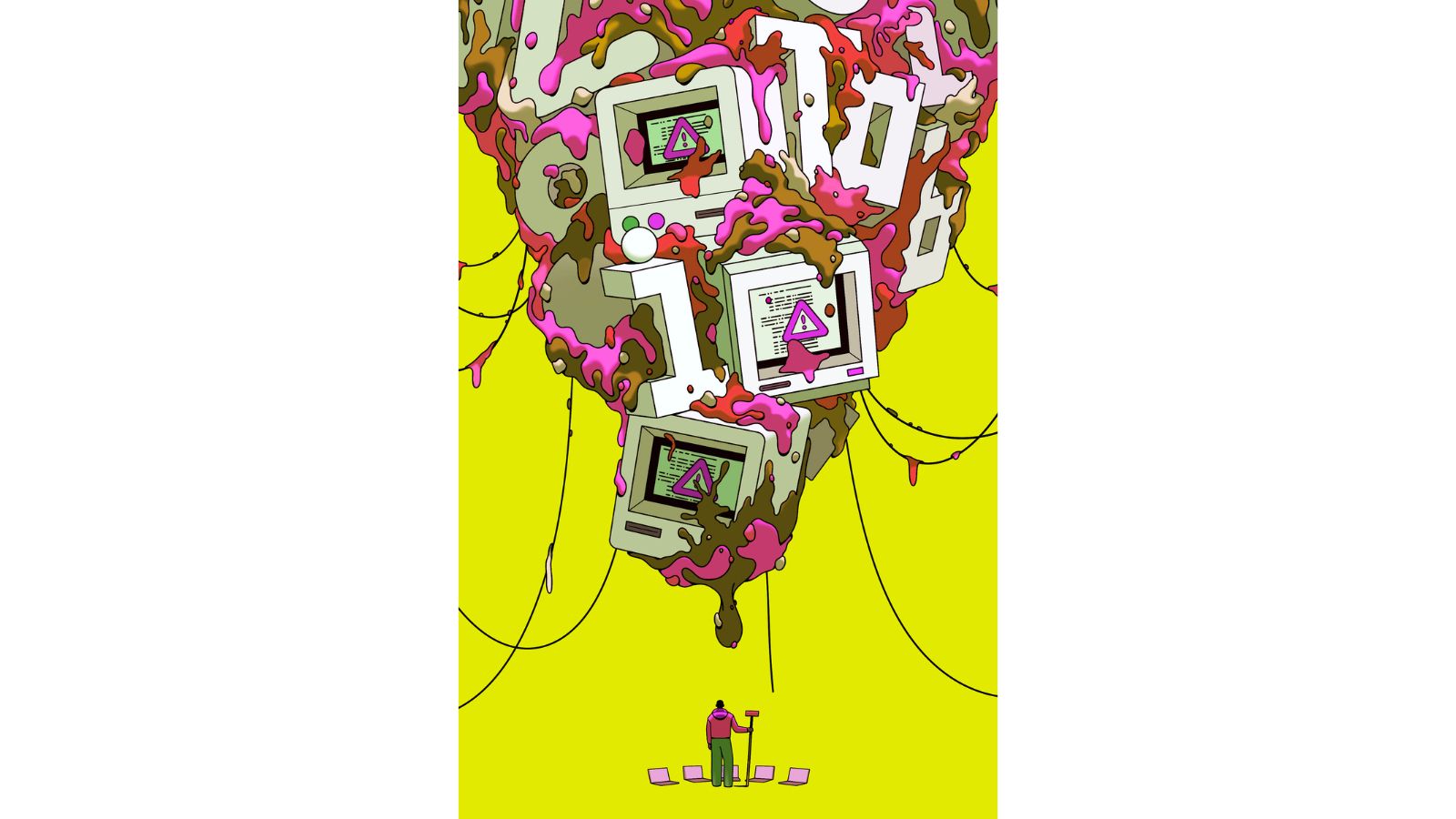

Since AI coding tools from Anthropic, OpenAI, Cursor and other companies took off last year, one result has now become apparent: code overload.

Aided by these tools, tech workers are producing so much code so quickly that it has become too much to handle. With anyone — not just engineers — able to spin up software ideas in a matter of hours, companies are trying to figure out how to deal with the glut.

In Silicon Valley, many tech workers see this moment as a new reality they must adapt to as companies incorporate AI tools into daily work. Some said the tools granted them coding superpowers, allowing them to spend more time coming up with software ideas instead of doing the arduous work of building it.

At the same time, there are not enough engineers to review the explosion of code for mistakes. Recruiters are increasingly looking to hire senior engineers who have experience spotting errors in code and can monitor the software for risks. Open source software projects, which anyone can contribute to, have been inundated with AI-enabled additions. And sometimes flaws in the code can lead to security vulnerabilities or software that crashes.

“The blessing and the curse is that now everyone inside your company becomes a coder,” said Michele Catasta, the president and head of AI at Replit, an AI coding startup in Foster City, California.

In a survey released by Google in September, the company found that 90% of software developers reported using AI to help them work, while 71% of those who write code used AI to help them write it.

The widespread use of the tools has led to fears that AI can replace many engineers. Tech companies including Pinterest, Block and Atlassian have cut thousands of jobs in recent months, citing efficiencies created by AI.

“Projects that once required hundreds of engineers can now be done by tens,” Andrew Bosworth, Meta’s chief technology officer, told employees this year in an internal memo, which was reviewed by The New York Times. “Work that used to take months can now take days.” He added that AI had “profound consequences for how organizations like Meta should work.”

Not long ago, the process of turning ones and zeros into computer programs was very different. Engineers pored over complicated computer languages to commit them to memory. They might write a few dozen lines of vetted, bulletproof code a day.

But AI advancements brought the rise of agents, a kind of AI-powered robot that can create software largely on its own. Early versions of these agents, including from startups like Cursor, showed promise. Then in November, coding agents leveled up. Anthropic and OpenAI, the leading AI startups, released updated versions of the software that powers their respective coding tools, Claude Code and Codex.

The change, tech workers soon discovered, upgraded the agents from occasionally helpful engineering partners to full-fledged code-generating wizards. With just a little human guidance, an engineer could set an AI agent loose writing a program in a fraction of the time that human coders would need. What came next was a deluge of code.

Many tech companies are now dealing with the ripple effects. Someone has to review the AI-generated code to test it for bugs, security and compliance. But it can sometimes be unclear whose job it is to fix issues created by AI-generated code. In the past, it would be the responsibility of the person who created the code.

Companies are struggling to hire enough people to monitor the AI code for risks, a role called application security engineer. “There are not enough application security engineers on the planet to satisfy what just American companies need,” said Joe Sullivan, an adviser to Costanoa Ventures, a Silicon Valley venture firm. The large companies he works with would add five to 10 more people in this role if they could, he said.

Other problems are quirkier. AI coding tools work better on laptops than in web-based environments stored on secure servers owned by companies like Amazon and Microsoft. That means more engineers are downloading their entire company’s code to their laptops, creating a security risk if the laptop goes missing, Sullivan said.

“That’s an example of a crazy risk no one thought of six months ago that they’re trying to solve right now,” he said.

Sachin Kamdar, a co-founder of Elvex, an AI agent startup, said he created a rule around 16 months ago that all of the company’s code needed to be reviewed by a human. Otherwise, problems would be harder to fix because no one would understand the work that AI had done.

“It’s just going to break something, and they’re not going to know why it broke,” he said.

At companies that accept code contributions, the AI effect has become clear. Steve Ruiz, founder of the digital whiteboard startup Tldraw, said he first noticed last fall that more people were trying to add to the code base of his company. (Tldraw licenses its technology, but it publishes its code and takes contributions to it.)

Ruiz said the new contributors acted oddly. Some did all the work but abandoned the code just before signing a form at the end of the process. Others ignored clear instructions or contributed a spammy barrage of updates.

Ruiz concluded the contributors were probably AI bots, which were too much to manage. In January, he closed Tldraw to outsiders.

“The risk to the code base was very high,” he said, adding that the onslaught could have put his team, its community and the project’s reputation in jeopardy. Open source projects and coding platforms like GitHub are figuring out how to handle the new reality, he said.

For some in Silicon Valley, the solution to the code bloat seems obvious: more AI.

Anthropic and OpenAI pointed to recent product releases, including their AI-powered software review agents that are used to spot errors in code. (The Times has sued OpenAI and Microsoft for copyright infringement of news content related to AI systems. The companies have denied those claims.)

In December, Cursor bought Graphite, a startup that builds code-reviewing bots. The company is incorporating Graphite’s technology into an offering to help engineers prioritize the most sensitive code that needs vetting.

Tido Carriero, Cursor’s head of engineering, product and design, said the most advanced companies had figured out what to do about the AI-generated code eruption and were now focused on adapting their businesses to this new way of working. Cursor is building products to help with that, too, he said.

“The software development factory kind of broke,” he said. “We’re trying to rearrange the parts in some sense.”